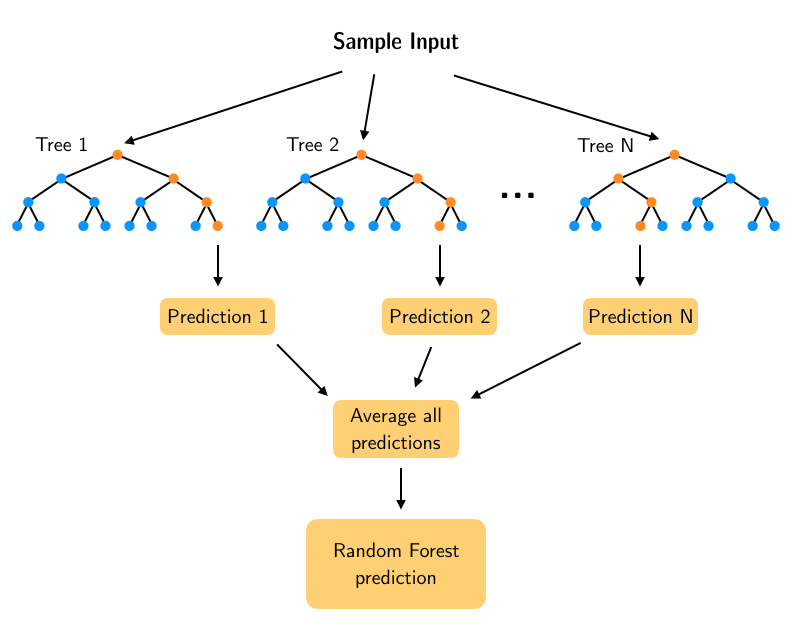

The random forest (RF) technique is an effective and popular method for solving classification and regression problems based on decision trees. It is a combination of tree predictors such that each tree depends on the values of a random vector sampled independently and with the same distribution for all trees in the forest. RF has been used in biology and medicine, for example on high-dimensional genetic or tissue microarray data and on MIMIC-III. Because of its simplicity, it is designed to operate quickly and efficiently over large datasets, and it offers high prediction accuracy relative to other models in classification settings.

The main contribution of this study is to increase the speed and accuracy of RF by adding a new feature selection step. Especially when working with big data, choosing an appropriate clustering method is important for improving both speed and accuracy. We show that a remarkable improvement in CPU time and accuracy can be achieved by adding the CoClust-based feature selection step to the random forest technique.

We work with two large datasets, namely the MIMIC-III Sepsis Dataset and the SMS Spam Collection Dataset. The first is large in terms of rows, which refer to individual IDs, while the second is an example of wide data with many variables to be considered. In the proposed approach, random forest is first employed without the CoClust step. Then, random forest is repeated on the clusters obtained with CoClust. The results are compared in terms of CPU time, accuracy and ROC (receiver operating characteristic) curve. CoClust clustering results are also compared with K-means and hierarchical clustering techniques. Finally, the Random Forest, Gradient Boosting and Logistic Regression results obtained with these clusters, and the success of RF and CoClust working together, are examined.
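The comparison criteria mentioned here (CPU time, accuracy and ROC) can be made concrete with a small sketch. This is only an illustration under stated assumptions, not the study's code: the helper names `accuracy`, `roc_auc`, `timed` and `evaluate` are hypothetical, the 0.5 decision threshold is an assumption, and the ROC curve is summarized by its area (AUC), computed as the fraction of positive/negative pairs that the scores rank correctly.

```python
import time


def accuracy(y_true, y_pred):
    """Fraction of labels predicted correctly."""
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    return correct / len(y_true)


def roc_auc(y_true, scores):
    """Area under the ROC curve via pairwise comparison.

    Equals the probability that a randomly chosen positive sample
    receives a higher score than a randomly chosen negative one
    (ties count as half a win).
    """
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("both classes must be present")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def timed(fn, *args):
    """Run fn(*args) and return (result, CPU seconds used)."""
    start = time.process_time()
    result = fn(*args)
    return result, time.process_time() - start


def evaluate(predict_scores, X, y_true, threshold=0.5):
    """Collect the three criteria for one model (hypothetical helper).

    predict_scores is any callable mapping X to class-1 scores.
    Only the scoring call is timed here.
    """
    scores, cpu_seconds = timed(predict_scores, X)
    y_pred = [1 if s >= threshold else 0 for s in scores]
    return {
        "cpu_seconds": cpu_seconds,
        "accuracy": accuracy(y_true, y_pred),
        "auc": roc_auc(y_true, scores),
    }
```

Calling `evaluate` once for a model trained on all variables and once for a model trained on a selected subset would give two dictionaries that can be compared side by side. The same `timed` wrapper could be placed around model fitting if training time is the quantity of interest.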
The random forest algorithm could be enhanced and produce better results with a well-designed and organized feature selection phase. The dependency structure between the variables is considered the most important criterion for selecting the variables used in the algorithm during this phase. As the dependency structure is mostly nonlinear, a tool that accounts for nonlinearity is a more beneficial approach. The copula-based clustering technique (CoClust) clusters variables with copulas according to their nonlinear dependency.

How can random forests deal with missing data?
Random forests can deal with missing data by making initial guesses and refining them based on the similarity of samples, which allows for accurate classification and prediction even with incomplete data.

How do random forests determine similarity?
Random forests determine similarity by running the data down all trees in the forest and tracking which samples end up together, using a proximity matrix.

What is the purpose of the proximity matrix in random forests?
The proximity matrix is updated after running the data down each tree, and the proximity values are used to make better guesses about missing data.

Can random forests accurately classify and predict with incomplete data?
Yes, random forests can accurately classify and predict even with incomplete data by using the proximity matrix to refine initial guesses about missing data.

What is the key benefit of using random forests for dealing with missing data?
The key benefit is the ability to make accurate predictions and classifications despite incomplete data, by leveraging the similarity of samples and refining initial guesses.
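The guess-then-refine idea described in these answers can be sketched in a few lines. This is a minimal illustration under several assumptions: the function names `initial_guess`, `proximity_matrix` and `refine` are hypothetical, the feature is numeric, missing values are marked with `None`, and `leaves` (the leaf each sample reaches in each tree) is assumed to come from a forest that has already been fitted elsewhere. It shows a single refinement pass; in practice the forest would be refitted and the pass repeated until the guesses stabilize.

```python
from statistics import median


def initial_guess(column):
    """Fill missing entries (None) with the median of the known values."""
    known = [v for v in column if v is not None]
    fill = median(known)
    return [fill if v is None else v for v in column]


def proximity_matrix(leaves):
    """Fraction of trees in which each pair of samples shares a leaf.

    leaves[t][i] is the leaf id that sample i reaches in tree t
    (assumed input, produced by an already fitted forest).
    """
    n_trees = len(leaves)
    n = len(leaves[0])
    counts = [[0] * n for _ in range(n)]
    for tree in leaves:
        for i in range(n):
            for j in range(n):
                if tree[i] == tree[j]:
                    counts[i][j] += 1
    return [[c / n_trees for c in row] for row in counts]


def refine(column, filled, prox):
    """Replace each guessed value with a proximity-weighted average
    of the observed values from the other samples."""
    refined = list(filled)
    for i, value in enumerate(column):
        if value is not None:
            continue  # observed values are left untouched
        weighted_sum = 0.0
        total_weight = 0.0
        for j, other in enumerate(column):
            if j == i or other is None:
                continue
            weighted_sum += prox[i][j] * other
            total_weight += prox[i][j]
        if total_weight > 0:
            refined[i] = weighted_sum / total_weight
    return refined
```

As a usage example, take the column `[1.0, 2.0, None, 10.0]` and two trees with leaf assignments `[[0, 0, 0, 1], [0, 1, 1, 2]]`. The initial median guess for the third sample is 2.0. That sample shares a leaf with the first sample in one of two trees and with the second sample in both, so the refined guess is the weighted average (0.5 × 1.0 + 1.0 × 2.0) / 1.5, which is about 1.67.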
